Since then, an increasing number of community partners have helped us create a multitude of language systems, such as Hmong Daw, Urdu, Swahili, Mayan, Otomí, Māori, and Inuktitut. Our engagement with community partners started in 2010, when we coordinated with the disaster response community to build a translation system for Haitian Creole within 10 days of Haiti’s devastating earthquake. They then evaluate the quality of the resulting machine translation models. These community partners, often volunteers working with their respective communities, painstakingly collect bilingual sentences by consulting with community members and elders. We are fortunate to work with partners in language communities who have access to human-translated texts and can help us gather data for under-resourced languages. For many languages, this bilingual data is hard to acquire, especially for digitally low-resourced or endangered languages. This data is comprised of high-quality human-translated content both in the language we want to add and in one of the languages the service already supports. One of the biggest challenges when adding new languages is obtaining enough bilingual data needed to train and produce a machine translation model. 
A collective effort in data gathering and evaluation Using multilingual transformer architecture, we could now augment training data with material from other languages, often in the same or a related language family, to produce models for languages with small amounts of data -commonly referred to as low-resource languages.Įven with all that technology available, it’s essential to have a body of digital documents available in the target language together with its translation in another already-included language-commonly referred to as parallel documents.įigure 1: The adoption of neural machine translation (NMT) technology in 2016 helped us increase the quality of translation, and transformer architecture, adopted in 2019, helped us build models for low resource languages. While NMT technology significantly increased overall translation quality, the advent of transformer architecture paved new ways for creating machine translation models, enabling training with smaller amounts of material than before.

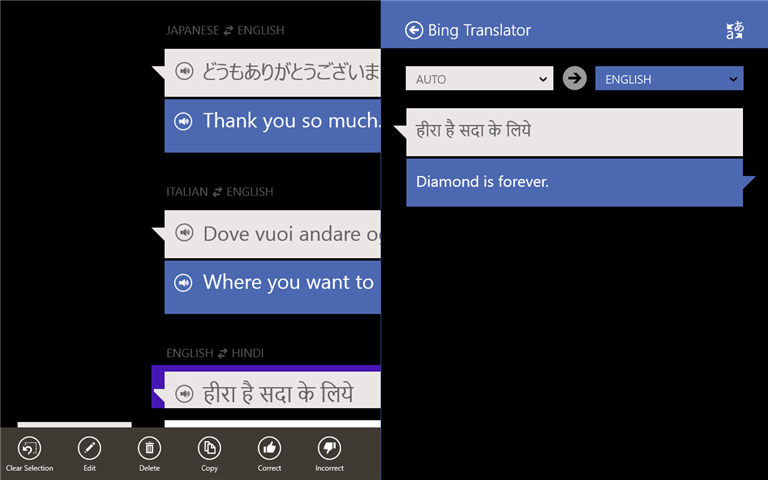

As artificial intelligence (AI) technology evolved, we adopted neural machine translation (NMT) technology and migrated all machine translation systems to neural models based on transformer technology, achieving massive gains in translation fluency and accuracy. Over the years, we added translation systems for many of the world’s most spoken languages. Microsoft evolved the systems further based on statistical machine translation (SMT) models and made it available to the public through Windows Live Translator, the Translator API, and as a built-in function in Microsoft Office applications. In 2003, a machine translation system translated the entire Microsoft Knowledge Base from English to Spanish, French, German, and Japanese, and the translated content was published on our website, making it the largest public-facing application of raw machine translation on the internet at the time. Microsoft Research first developed machine translation systems over 20 years ago.
